Deep Learning

Deep learning is a subset of machine learning that uses multi-layered artificial neural networks to identify patterns in raw data. By stacking these layers, the system automatically learns to categorize information like images and speech, eliminating the need for human experts to manually define rules or features.

Key Takeaways

- Deep learning relies on artificial neural networks, which are loosely inspired by biological neurons, to process and refine information across multiple layers.

- The “deep” in the name refers to the use of many intermediate or hidden layers, which allow the model to learn increasingly complex features from raw, unstructured data.

- Unlike traditional machine learning, these models automatically discover the best way to represent data, removing the need for human experts to manually hand-code rules.

- Deep learning tends to keep improving as data and computation grow, where many traditional models plateau; the gains are real, but they usually show diminishing returns rather than strictly linear growth.

- The “intelligence” of a model is built through a cycle of a Forward Pass (the guess) and Backpropagation (the correction), powered by specialized hardware like NVIDIA GPUs or Google TPUs.

- This technology underpins nearly all modern AI breakthroughs, from Self-Driving Vehicles and Medical Diagnostics to generative systems like ChatGPT and Midjourney.

What Is Deep Learning?

To truly define deep learning, one must look at it as a mathematical evolution of the way we process information. It is a subset of machine learning, but it removes the bottleneck of human intervention. In older versions of Artificial Intelligence (AI), a human had to tell the computer exactly what features to look for in a photo, e.g. the shape of a nose or the curve of an ear. Deep learning changed this by allowing the machine to discover those features on its own.

This is achieved through a structure known as an artificial neural network. When we describe a model as “deep,” we are talking about the credit assignment path, or the number of transformations the data goes through before a result is reached.

Since the mid-2010s, this approach has moved from academic research into the backbone of global industry. It is the reason your phone can organize your photos by the people in them and why researchers can now predict a protein’s structure computationally in a fraction of the time that laboratory methods require.

Understand Deep Learning Algorithms

A deep learning algorithm is essentially a massive, adjustable mathematical function. It operates on the principle of representation learning. Instead of being given a set of rules, the algorithm is given a goal, such as identifying a “stop sign” in a video feed, and a massive pile of examples.

These algorithms rely on something called weights and biases. Every connection between the artificial neurons in the system has a numerical value that determines how much influence one piece of data has on the next.
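To make weights and biases concrete, here is a minimal sketch of a single artificial neuron in plain Python. The function name `neuron_output` and all of the numbers are illustrative assumptions, not taken from any framework.

```python
def neuron_output(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias term (no activation applied)."""
    return sum(x * w for x, w in zip(inputs, weights)) + bias

# Three input values, each with its own weight (hypothetical numbers).
inputs = [1.0, 2.0, 3.0]
weights = [0.5, -1.0, 2.0]   # a larger magnitude means more influence
bias = 0.5

result = neuron_output(inputs, weights, bias)
print(result)
```

Changing a single weight changes how strongly the matching input pushes the result up or down, which is exactly what training adjusts.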

During the early stages of training, these values are essentially random. The algorithm is “blind.” As it processes more data, it uses an optimization function, often Stochastic Gradient Descent, to slowly tweak those numbers. This is not a fast process; it requires the algorithm to fail millions of times before it begins to see the “signal” within the “noise” of the data.

The algorithm is constantly trying to minimize a loss function, which measures how far its predictions are from reality. In practice it rarely reaches the true global minimum; it settles for a point where the loss is low enough for the predictions to be useful.
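The tweaking process can be sketched with a toy example: fitting a single weight so that `w * x` matches the target `y`. This is a simplified illustration of Stochastic Gradient Descent under assumed settings (the data, learning rate, and function name `sgd_fit` are hypothetical), not how a production framework implements it.

```python
import random

def sgd_fit(points, learning_rate=0.01, epochs=200, seed=0):
    """Fit y = w * x with stochastic gradient descent on squared error."""
    rng = random.Random(seed)
    w = rng.uniform(-1.0, 1.0)          # training starts from a random weight
    data = list(points)
    for _ in range(epochs):
        rng.shuffle(data)               # visit the examples in random order
        for x, y in data:
            error = w * x - y           # prediction minus target
            gradient = 2 * error * x    # derivative of error**2 with respect to w
            w -= learning_rate * gradient
    return w

# Toy dataset where the true relationship is y = 2 * x.
points = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
learned_w = sgd_fit(points)
print(round(learned_w, 3))
```

Each update uses one example at a time, which is what makes the procedure "stochastic"; real models repeat the same idea across millions or billions of weights.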

Why Deep Learning Matters Today

We have reached a point in history where the world produces more data than humans can ever hope to categorize. This is the “Big Data” era. Traditional machine learning models have a fatal flaw: they eventually stop getting smarter. No matter how much data you give a standard algorithm, its performance eventually plateaus.

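Deep learning is different. Its performance tends to keep improving as you add data and computation, well past the point where traditional models level off. The gains are not strictly linear (each doubling of data or compute usually buys a smaller improvement than the last), but they are consistent enough that more information and more powerful processors generally yield a more accurate model.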

This is why it is essential today. In fields like genomics, deep learning helps sift through the roughly three billion base pairs of the human genome to find a single mutation. In finance, it helps analyze enormous volumes of trades in near real time to flag fraud and unusual market activity. It has moved beyond being a “feature” and has become core infrastructure for the modern digital economy.

How Deep Learning Works

The inner workings of a deep learning model are often compared to a “black box” because of their complexity, but the mechanical process is actually very logical. It is a series of nested equations that filter information through various stages of abstraction.

Neural Networks in Simple Words

At the simplest level, a neural network is a web of “nodes” that pass signals to one another. Imagine a line of people where each person is only allowed to answer “yes” or “no” to a very specific question. The first person might ask, “Is there a red pixel here?” If the answer is yes, they pass a signal to the next person. The next person might ask, “Are there several red pixels in a circle?”

By the time the signal reaches the end of the line, the final person can confidently say, “This is a picture of a tomato.” In a computer, these “people” are artificial neurons, and the questions are activation functions like ReLU (Rectified Linear Unit) or Sigmoid.
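The two activation functions named above are short enough to write out by hand. This sketch uses only the standard library. Note that, unlike the strict yes/no answers in the analogy, real activations return graded values: ReLU passes positive signals through unchanged, and Sigmoid squeezes any number into the range 0 to 1.

```python
import math

def relu(x):
    """Rectified Linear Unit: negative signals become 0, positive ones pass through."""
    return max(0.0, x)

def sigmoid(x):
    """Squash any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

for signal in (-2.0, 0.0, 3.0):
    print(signal, relu(signal), round(sigmoid(signal), 3))
```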

Layers Explained (Input, Hidden, Output)

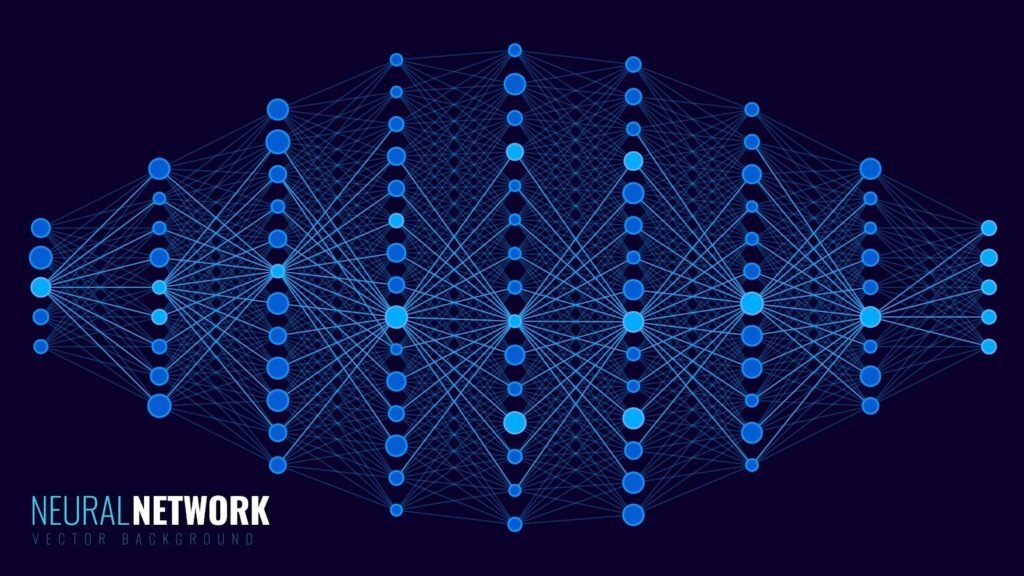

A deep learning model is always organized into a specific hierarchy of layers, and the number of layers determines the “depth” of the network.

The Input Layer: This is where the raw data enters. If you are training a model to read handwriting, each node in this layer corresponds to a single pixel of the image. The input layer does no calculation; it simply holds the data and passes it forward.

The Hidden Layers: This is where the deep part of deep learning happens. Most modern models have dozens or even hundreds of hidden layers. Each layer takes the output of the previous one and performs a new mathematical transformation on it.

- The first hidden layers might find edges.

- The middle hidden layers might find textures or patterns.

- The final hidden layers might find complex parts, like an eye or a wheel.

The Output Layer: This is the final destination. This layer provides the final classification or prediction. If the model is trying to identify an animal, the output layer will have a list of probabilities, such as 98% Cat, 1% Dog, 1% Bird.
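As a rough sketch of how the three kinds of layers fit together, the code below pushes four "pixels" through one hidden layer and an output layer that produces class probabilities. Everything here is an assumption made for illustration: the helper names, the layer sizes, the weights, and the use of softmax to turn scores into probabilities. Because the weights are arbitrary rather than trained, the printed probabilities are meaningless as predictions.

```python
import math

def dense(inputs, weights, biases):
    """Fully connected layer: each row of `weights` produces one output node."""
    return [sum(x * w for x, w in zip(inputs, row)) + b
            for row, b in zip(weights, biases)]

def relu_layer(values):
    """Apply ReLU to every node in a layer."""
    return [max(0.0, v) for v in values]

def softmax(values):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(values)                      # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def forward(pixels, hidden_w, hidden_b, out_w, out_b):
    hidden = relu_layer(dense(pixels, hidden_w, hidden_b))  # hidden layer
    return softmax(dense(hidden, out_w, out_b))             # output layer

# Input layer: four pixel intensities (hypothetical values).
pixels = [0.0, 0.5, 1.0, 0.5]
# Hidden layer: 2 nodes with 4 weights each. Output layer: 3 classes.
hidden_w = [[0.2, -0.1, 0.4, 0.3], [-0.3, 0.5, 0.1, -0.2]]
hidden_b = [0.0, 0.1]
out_w = [[1.0, -0.5], [-0.4, 0.8], [0.3, 0.3]]
out_b = [0.0, 0.0, 0.0]

probabilities = forward(pixels, hidden_w, hidden_b, out_w, out_b)
for label, p in zip(["cat", "dog", "bird"], probabilities):
    print(f"{label}: {p:.2%}")
```

A deep network simply repeats the hidden-layer step many times before reaching the output layer.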

How Models Learn (Training and Data)

Training a model is the most resource-intensive part of the process. It requires a Ground Truth, which is a dataset where humans have already labeled the correct answers.

The model performs a Forward Pass, where it takes an input and pushes it through all the layers to see what output it gets. Because it is untrained, the output is usually wrong. This is where the importance of data comes in. Without millions of high-quality examples, the model can never find the patterns it needs to succeed.

To handle this workload, developers use specialized hardware:

- GPUs: Originally made for video games, these are perfect for deep learning because they can do thousands of small math problems at the same time.

- TPUs: Google’s custom-built chips designed specifically to speed up the training of massive neural networks.

What Is Backpropagation?

If the forward pass is the guess, backpropagation is the lesson. It is the mathematical backbone of all deep learning. Once the model makes a mistake, it calculates the loss—the difference between its guess and the correct label.
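The text does not name a specific loss, so the sketch below assumes cross-entropy, one common choice for classification. It scores a prediction by how much probability the model gave to the correct label: the more confident and correct the guess, the closer the loss is to zero.

```python
import math

def cross_entropy(probabilities, correct_index):
    """Loss for one example: negative log of the probability given to the true class."""
    return -math.log(probabilities[correct_index])

# Model output for one image: [cat, dog, bird] (hypothetical numbers).
guess = [0.98, 0.01, 0.01]

loss_if_cat = cross_entropy(guess, 0)   # the label really was "cat"
loss_if_dog = cross_entropy(guess, 1)   # the label was actually "dog"
print(round(loss_if_cat, 4), round(loss_if_dog, 4))
```

A confident wrong answer is punished far more heavily than a confident right answer is rewarded, which gives backpropagation a strong signal to correct it.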

Backpropagation then works backward from the output layer to the input layer. It uses the Chain Rule from calculus to determine exactly how much each weight in the network contributed to the error.

It is a process of tiny adjustments. The model doesn’t fix the mistake in one go. Instead, it nudges each weight a small step in the direction that reduces the loss, and the size of that step is controlled by the Learning Rate. If the learning rate is too high, the updates overshoot and the model may never settle on a good solution. If it is too low, training can take far longer than is practical. This delicate balance of backpropagation and gradient descent is what eventually turns a pile of code into a smart system.
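Here is a hand-written sketch of backpropagation on the smallest network that still needs the chain rule: one input, one hidden neuron with a sigmoid, and one output weight. The network shape, the squared-error loss, the starting weights, and the learning rate are all assumptions chosen to keep the arithmetic readable; frameworks automate these derivative steps.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(x, w1, w2):
    h = sigmoid(w1 * x)   # hidden activation
    y_hat = w2 * h        # network output (the guess)
    return h, y_hat

def loss(x, y, w1, w2):
    _, y_hat = forward(x, w1, w2)
    return (y_hat - y) ** 2

def backward(x, y, w1, w2):
    """Chain rule, applied from the output back toward the input."""
    h, y_hat = forward(x, w1, w2)
    d_yhat = 2.0 * (y_hat - y)        # dL/dy_hat
    d_w2 = d_yhat * h                 # dL/dw2 = dL/dy_hat * dy_hat/dw2
    d_h = d_yhat * w2                 # dL/dh  = dL/dy_hat * dy_hat/dh
    d_w1 = d_h * h * (1.0 - h) * x    # sigmoid'(z) = h * (1 - h), dz/dw1 = x
    return d_w1, d_w2

def train(x, y, w1, w2, learning_rate=0.1, steps=500):
    for _ in range(steps):
        d_w1, d_w2 = backward(x, y, w1, w2)
        w1 -= learning_rate * d_w1    # small step against the gradient
        w2 -= learning_rate * d_w2
    return w1, w2

x, y = 1.5, 0.8                       # one training example (hypothetical)
start_w1, start_w2 = 0.2, -0.4        # arbitrary starting weights
trained_w1, trained_w2 = train(x, y, start_w1, start_w2)
print("loss before:", loss(x, y, start_w1, start_w2))
print("loss after: ", loss(x, y, trained_w1, trained_w2))
```

Each line in `backward` multiplies the gradient flowing in from the layer above by one local derivative, which is the chain rule in action.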

Deep Learning vs Machine Learning vs Artificial Intelligence

In the professional world of data science, these terms are often used interchangeably by marketers, but for an engineer or strategist, they represent distinct layers of complexity. You can visualize this as a set of nesting dolls, where each category is a specialized version of the one before it.

Artificial Intelligence (AI) is the broadest possible container. It refers to any computer system capable of performing a task that would normally require human intelligence, such as logical reasoning or planning. This includes older approaches such as the rule-based expert systems of the 1980s, which followed strict if-then commands.

Machine Learning (ML) is a subset of AI. It represents the shift from telling the computer what to do to showing the computer what to do. ML uses statistical methods to enable machines to improve at a task with experience. However, traditional ML has a significant limitation: Feature Engineering. A human expert must still manually identify the most important data points (features) for the model to analyze.

Deep Learning (DL) is the most specialized subset of Machine Learning. It eliminates the need for manual feature engineering. By using multi-layered neural networks, the system discovers the features on its own. While a standard ML model might need a human to define what a “wheel” looks like to identify a car, a Deep Learning model simply looks at millions of cars and figures out the concept of a wheel automatically.

Real-World Applications of Deep Learning

Deep learning has moved from the laboratory into the core infrastructure of our daily lives. Its ability to process unstructured data at a scale humans cannot match has created multi-billion dollar industries.

Image Recognition

This is perhaps the most visible success of deep learning. It powers everything from the FaceID on your smartphone to the automated sorting of medical images. In oncology, deep learning models have been reported to detect early-stage tumors in medical scans with accuracy that rivals experienced radiologists on specific tasks. The system doesn’t just see a picture; it learns statistical patterns across pixels and can flag anomalies that are easy for the human eye to miss.

Natural Language Processing (NLP)

Natural Language Processing (NLP) is the technology that allows machines to understand and generate human language. Before deep learning, translation tools like Google Translate were clunky and literal. Today, models use embeddings to understand the context and sentiment behind words. This allows for real-time translation that captures nuances, slang, and technical jargon across hundreds of languages simultaneously.
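An embedding is simply a list of numbers assigned to a word, arranged so that words with similar meanings end up pointing in similar directions. The sketch below compares words with cosine similarity. The three-number vectors are made up for illustration; real embeddings are learned during training and typically have hundreds of dimensions.

```python
import math

def cosine_similarity(a, b):
    """1.0 means the vectors point the same way; values near 0 mean unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical hand-made embeddings, not taken from any trained model.
embeddings = {
    "happy":  [0.9, 0.8, 0.1],
    "joyful": [0.8, 0.9, 0.2],
    "engine": [0.1, 0.2, 0.9],
}

similar = cosine_similarity(embeddings["happy"], embeddings["joyful"])
different = cosine_similarity(embeddings["happy"], embeddings["engine"])
print(round(similar, 2), round(different, 2))
```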

Self-Driving Cars

Autonomous vehicles rely on sensor fusion powered by deep learning. The car uses Computer Vision to identify pedestrians, traffic lights, and other vehicles. Simultaneously, it uses deep learning to predict the behavior of those objects, for example by calculating the probability that a child on a sidewalk might run into the street. Companies like Tesla and Waymo use massive neural networks to process petabytes of driving data every day to refine these split-second decisions.

Recommendation Systems

Every time you open Netflix, Amazon, or TikTok, a deep learning model is working in the background. These systems go beyond “people who bought X also bought Y.” They analyze your specific viewing habits, the time of day you browse, and even the speed at which you scroll to build a multi-dimensional profile of your interests, ensuring that the content you see is mathematically tuned to keep you engaged.

Generative AI

This is the newest and most disruptive application. Models like ChatGPT, Claude, and Midjourney use deep learning to create entirely new content. By learning the underlying structure of human language or artistic styles, these models don’t just search for an answer; they synthesize information to generate unique essays, computer code, or hyper-realistic images from a simple text prompt.

Types of Deep Learning Models

Selecting the right architecture is one of the most important decisions a data scientist makes. While all deep learning relies on neurons and layers, the wiring of those layers changes based on whether the machine needs to see a pixel, hear a phoneme, or predict a sequence.

Convolutional Neural Networks (CNNs)

If you are building a system that processes visual information, you are almost certainly using a Convolutional Neural Network. Inspired by the biological visual cortex, CNNs do not look at an entire image as a single flat file. Instead, they use a mathematical operation called a convolution to scan the image in small, overlapping sections.

The magic of a CNN lies in its filters. Imagine a small window sliding across a photo of a forest. One filter might only be sensitive to vertical lines (tree trunks), while another looks for specific green hues (leaves).

To save on computing power, CNNs use pooling to shrink the data size while keeping the most important features. Because the same filters are applied across the whole image and pooling discards exact positions, the model becomes largely translation invariant, meaning it can recognize a cat whether it is in the top-left corner or the bottom-right of the frame.
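The sliding window and the pooling step can both be sketched in a few lines of plain Python. The 5x5 "image" and the vertical-line filter below are hypothetical, and the filter values in a real CNN are learned rather than written by hand. As in most deep learning libraries, the operation shown slides the filter without flipping it (strictly speaking, a cross-correlation).

```python
def convolve2d(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and sum the products."""
    k_rows, k_cols = len(kernel), len(kernel[0])
    out_rows = len(image) - k_rows + 1
    out_cols = len(image[0]) - k_cols + 1
    return [[sum(image[r + i][c + j] * kernel[i][j]
                 for i in range(k_rows) for j in range(k_cols))
             for c in range(out_cols)]
            for r in range(out_rows)]

def max_pool(feature_map, size=2):
    """Keep the largest value in each non-overlapping size x size block."""
    rows = len(feature_map) // size
    cols = len(feature_map[0]) // size
    return [[max(feature_map[r * size + i][c * size + j]
                 for i in range(size) for j in range(size))
             for c in range(cols)]
            for r in range(rows)]

# A 5x5 image with a bright vertical stripe down the middle (a "tree trunk").
image = [[0, 0, 1, 0, 0] for _ in range(5)]

# A filter that responds strongly to vertical lines (hypothetical values).
vertical_filter = [[-1, 2, -1],
                   [-1, 2, -1],
                   [-1, 2, -1]]

feature_map = convolve2d(image, vertical_filter)
pooled = max_pool(feature_map)
print(feature_map)
print(pooled)
```

The feature map peaks where the window is centered on the stripe, and pooling keeps that peak while throwing away its exact position.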

This is the tech behind Google Lens and the automated defect detection used in high-tech manufacturing lines.

Recurrent Neural Networks (RNNs)

While CNNs excel at space, Recurrent Neural Networks are the masters of time. Traditional neural networks assume that all inputs are independent of each other. RNNs, however, are designed for sequential data where the “before” matters to the “after.”

An RNN has a “hidden state” that acts as a short-term memory. When it processes the second word in a sentence, it remembers what the first word was. This makes them ideal for speech recognition or predicting the next price point in a volatile stock market.

A major technical hurdle for standard RNNs is that they forget the beginning of long sequences. To fix this, researchers developed Long Short-Term Memory (LSTM) units and Gated Recurrent Units (GRUs), which use “gates” to decide which information is worth keeping and what should be discarded.
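A single recurrent unit is enough to show both the hidden state and the forgetting problem. In this sketch the weights are fixed, hypothetical numbers and the unit is a plain tanh RNN cell rather than an LSTM or GRU. The input "speaks" only at the first step, and the hidden state carries a fading echo of it forward.

```python
import math

def rnn_step(x, h_prev, w_x, w_h, b):
    """One recurrent step: mix the new input with the previous hidden state."""
    return math.tanh(w_x * x + w_h * h_prev + b)

def run_rnn(sequence, w_x=0.5, w_h=0.8, b=0.0):
    """Feed a sequence through the unit and record the hidden state at each step."""
    h = 0.0                     # empty memory before the sequence starts
    states = []
    for x in sequence:
        h = rnn_step(x, h, w_x, w_h, b)
        states.append(h)
    return states

# A signal at the first step, followed by silence.
states = run_rnn([1.0, 0.0, 0.0, 0.0])
print([round(h, 3) for h in states])
```

The later states are non-zero even though their inputs are zero, which is the short-term memory at work. They also shrink at every step, which is the forgetting that gated units such as LSTMs and GRUs were designed to reduce.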

Transformers: The Shift to Attention

In 2017, a research paper titled Attention Is All You Need changed the world. It introduced the Transformer architecture, which has largely superseded RNNs for language tasks.

Unlike RNNs, which read text from left to right, Transformers look at the entire sentence at once. They use a mechanism called Self-Attention to weigh the importance of every word in relation to every other word. For example, in the sentence “The animal didn’t cross the street because it was too tired,” a Transformer mathematically links the word “it” to “animal” with a high weight, whereas an older model might have struggled to know if “it” referred to the street or the animal.
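A stripped-down sketch of self-attention follows. It makes two loud simplifications: the word vectors are invented two-number stand-ins, and the same vectors are used as queries, keys, and values, whereas a real Transformer learns separate projections for each. The score-softmax-mix pattern (scaled dot-product attention) is the part that carries over.

```python
import math

def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values = `vectors`."""
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Score this word against every word in the sentence, itself included.
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in vectors]
        weights = softmax(scores)
        # New representation: a weighted blend of all the word vectors.
        mixed = [sum(w * value[d] for w, value in zip(weights, vectors))
                 for d in range(dim)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

words = ["animal", "street", "it"]
vectors = [[1.0, 0.0],    # hypothetical embedding for "animal"
           [0.0, 1.0],    # hypothetical embedding for "street"
           [0.9, 0.1]]    # "it", placed close to "animal" on purpose

outputs, attention = self_attention(vectors)
for word, weight in zip(words, attention[2]):
    print(f"it -> {word}: {weight:.2f}")
```

Because every word is scored against every other word independently, all of these rows can be computed at the same time, which is where the parallelism of Transformers comes from.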

Because they process data in parallel rather than in a sequence, Transformers can be trained on much larger datasets, which is why they are the foundation for GPT-4, Claude, and Gemini.

Reinforcement Learning (RL) and Deep RL

Reinforcement Learning is fundamentally different because it does not rely on a static dataset of correct answers. Instead, it is a framework of Agent, Environment, and Reward.

An AI agent is placed in a digital environment (like a video game or a power grid simulator) and allowed to take actions. If an action leads to a goal, the agent receives a positive reward. If it fails, it receives a penalty.

Deep Reinforcement Learning uses a neural network to help the agent decide which action to take next. This allowed DeepMind’s AlphaZero to teach itself chess through self-play and, after roughly four hours of training, outperform Stockfish, one of the strongest conventional chess engines of its time.

Deep reinforcement learning has also been applied to the cooling systems in massive data centers, where it reduces electricity use by constantly “playing” with the controls in search of a more efficient setting.
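The Agent, Environment, and Reward loop can be sketched with a toy problem: an agent in a five-cell corridor that earns a reward for reaching the right-hand end. This example uses tabular Q-learning, where a small lookup table stands in for the neural network that a deep RL system would use. The environment, the reward values, and the hyperparameters are all assumptions made for illustration.

```python
import random

def train_corridor_agent(length=5, episodes=500, alpha=0.5, gamma=0.9,
                         epsilon=0.2, seed=0):
    """Tabular Q-learning on a 1-D corridor; the goal is the right-most cell."""
    rng = random.Random(seed)
    moves = (-1, +1)                              # action 0 = left, action 1 = right
    q = [[0.0, 0.0] for _ in range(length)]       # estimated value of each action
    for _ in range(episodes):
        state = 0
        while state != length - 1:
            # Explore occasionally, otherwise take the best-known action.
            if rng.random() < epsilon:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            next_state = min(max(state + moves[action], 0), length - 1)
            # Positive reward at the goal, small penalty for every other step.
            reward = 1.0 if next_state == length - 1 else -0.01
            # Move the estimate toward reward plus discounted future value.
            target = reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q

q_table = train_corridor_agent()
policy = ["right" if right > left else "left" for left, right in q_table[:-1]]
print(policy)
```

Nobody tells the agent that "right" is correct; it discovers this purely from the rewards and penalties it collects.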

Advantages of Deep Learning

The primary reason deep learning has replaced traditional statistical models in the enterprise is its ability to handle feature extraction automatically. In older systems, a human expert had to manually define what data points were important—a process that was both slow and prone to error. Deep learning removed this bottleneck by allowing the neural network to determine which characteristics of the data matter most for a specific goal.

This architectural shift leads to several distinct benefits that allow the technology to outperform almost any other mathematical approach.

- Maximum Utilization of Unstructured Data: Most of the world’s data is messy and consists of raw images, social media posts, audio files, and video streams. Traditional databases and machine learning models struggle with this format, but deep learning thrives on it. By using layers of filters, organizations can find hidden correlations in unstructured data that were previously invisible to software.

- Unmatched Scalability with Big Data: Standard machine learning models often hit a performance ceiling where adding more data no longer makes them noticeably smarter. Deep learning models tend to keep improving as data, model size, and compute power grow, although the gains usually show diminishing returns rather than a straight line. As long as you provide high-quality data and enough GPU resources, the model’s accuracy generally continues to climb.

- End-to-End Learning Efficiency: In a deep learning pipeline, the same model handles the input, the feature discovery, and the final classification. This integrated approach reduces the need for multiple fragmented software systems, creating a more streamlined and reliable path from raw data to an actionable insight.

Challenges and Limitations of Deep Learning

Despite its transformative power, deep learning is not a magic bullet. It comes with significant costs and technical hurdles that can limit its application for smaller organizations or highly sensitive industries. One of the most significant barriers is the massive data hunger required to reach human-level accuracy. For specialized fields like rare disease research or niche languages, gathering enough high-quality, labeled data is often the biggest obstacle to success.

Beyond the data requirements, there are several inherent risks that engineers and stakeholders must manage.

The high computational and environmental costs are impossible to ignore. Training a state-of-the-art model like GPT-4 or Llama 3 requires thousands of high-end GPUs running for months. This consumes an enormous amount of electricity and requires a massive financial investment in hardware, making the cost of compute the largest single expense for most AI startups.

Another critical issue is the Black Box Problem, where it becomes nearly impossible to trace the exact logic behind a single output. Because a neural network might have billions of internal weights, the lack of transparency is a major concern in high-stakes fields like banking, criminal justice, and medicine.

Finally, the susceptibility to bias remains a constant threat. If the training data contains human prejudices, the model will learn and amplify them. If a facial recognition model is trained mostly on one demographic, it will perform poorly on others. This has led to a major global push for AI Ethics and more diverse, representative datasets to ensure fairness in automated decision-making.

In a real-world production environment, debugging a deep learning model is often significantly harder than debugging traditional code. When a standard software program fails, there is usually a clear line of broken logic, but when a neural network fails, it often fails silently, producing confident but incorrect results that require deep statistical forensics to uncover.

How to Start Learning Deep Learning

Entering this field requires a shift from general programming to mathematical engineering. It is a journey that moves from writing lines of code to designing the flow of information through a complex system of layers.

Skills You Need

You do not need to be a theoretical mathematician, but you do need to understand the language of data. Professional practitioners typically focus on three core areas to build their foundation.

- Programming Proficiency: Python is the undisputed king of deep learning. You must be comfortable with libraries like NumPy for vector math and Pandas for data manipulation.

- Applied Mathematics: You should have a foundational grasp of Linear Algebra to understand how data moves in matrices and Calculus to understand how backpropagation calculates error through the chain rule.

- Data Intuition: You must learn how to clean and preprocess data. A great model with bad data will always fail, a concept known in the industry as Garbage In, Garbage Out.

Tools and Frameworks

In the professional world, almost everyone uses one of two major open-source frameworks to build and deploy their networks.

PyTorch: Developed by Meta’s AI Research lab, PyTorch is the favorite of the academic and research community. It is known for being Pythonic and flexible, making it significantly easier to debug and experiment with new, unproven architectures.

TensorFlow and Keras: Developed by Google, TensorFlow is often the choice for industrial-scale deployment and mobile applications. It is highly stable and pairs with Keras, a high-level API that allows you to build a fully functional neural network in just a few lines of code.

Beginner Learning Roadmap

To move from a curious beginner to a capable practitioner, you must follow a structured progression that builds on existing knowledge.

- Master Python Basics: Focus on the logic of data structures and object-oriented programming.

- Learn Classical Machine Learning: Understand basic concepts like Linear Regression, Random Forests, and Overfitting using Scikit-Learn.

- Study Neural Network Fundamentals: Learn to calculate the Forward Pass and Backpropagation manually on paper before ever touching a coding framework.

- Specialization: Choose a specific path based on your interests. If you like images, study Computer Vision. If you prefer text and language, dive into Transformers and LLMs.

- Build and Deploy: Do not just follow tutorials. Build a unique project, host it on GitHub, and try to optimize its performance on a real-world dataset from a platform like Kaggle.

Beginner Project Ideas in Deep Learning

The most effective way to learn is by solving a problem that interests you. Instead of just following a tutorial, try to build a project where you have to handle messy data or optimize a model for a specific result.

- Image Classification with CIFAR-10

This is a classic starting point. You will build a Convolutional Neural Network (CNN) to identify objects like airplanes, cars, and birds in a dataset of 60,000 small images. It teaches you how to handle image preprocessing and layer stacking.

- Sentiment Analysis on Movie Reviews

Using a dataset from IMDb, you can build a Recurrent Neural Network (RNN) or a simple Transformer to determine if a review is positive or negative. This project introduces you to natural language processing and word embeddings.

- Handwritten Digit Recognition (MNIST)

Often called the “Hello World” of deep learning, this project involves training a network to recognize handwritten numbers. It is an excellent way to see how the mathematical “weights” in a network begin to identify shapes and curves.

- Generating Human Faces with DCGANs

If you are interested in generative AI, try building a Deep Convolutional Generative Adversarial Network. You will train two networks against each other, one to create fake faces and one to spot them, until the computer can generate realistic human portraits from scratch.

In a professional setting, the strongest beginners are often not the ones who achieve a high accuracy score, but the ones who can explain why their model failed on certain edge cases. Documenting your failed experiments is often more valuable for your portfolio than the final successful code.

Future of Deep Learning

As we move through 2026, the field is shifting away from simply making models bigger and toward making them more efficient and autonomous. The brute force era of deep learning is being replaced by Agentic AI, where models don’t just answer questions but execute complex, multi-step tasks independently.

We are also seeing the rise of Edge AI. Instead of sending data to a massive cloud server, deep learning models are being optimized to run directly on hardware like smartwatches, drones, and medical devices. This requires techniques such as Model Compression and Quantization, where engineers shrink a model’s size while giving up as little accuracy as possible.
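To give a feel for quantization, the sketch below stores floating-point weights as small integers plus a single scale factor, then converts them back. It assumes the simplest scheme (symmetric, one scale for the whole list); production toolchains use more refined variants. The weight values are hypothetical.

```python
def quantize_int8(weights):
    """Symmetric linear quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(w / scale) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]

weights = [0.42, -1.27, 0.003, 0.9]        # hypothetical float weights
quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

print(quantized)
print([round(w, 3) for w in restored])
```

Each weight now fits in one byte instead of four, at the cost of a small rounding error: values much smaller than the scale, like the 0.003 here, collapse to zero. Keeping that error from hurting accuracy is the hard part of the technique.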

Finally, Explainable AI (XAI) is becoming a legal and ethical necessity. The black box nature of deep learning is no longer acceptable in regulated industries. The future involves creating neural networks that can provide a “receipt” for their logic, explaining exactly which features led to a specific decision in a way that humans can audit and trust.

FAQs About Deep Learning

What is deep learning in simple words?

It is a type of artificial intelligence loosely inspired by the way the human brain learns from experience. It uses “neural networks” to look at data, like photos or text, and find patterns without a human having to explain every rule.

Is deep learning the same as machine learning?

Not exactly. Deep learning is a specialized subset of machine learning. While standard machine learning often requires a human to help organize the data, deep learning can automatically discover the important features on its own.

What programming language is best for deep learning?

Python is the industry standard due to its massive ecosystem of libraries like PyTorch and TensorFlow. However, for high-performance systems or robotics, C++ and Rust are becoming increasingly popular for their speed and memory safety.

What are the main applications of deep learning?

It powers the world’s most advanced technology, including facial recognition on your phone, self-driving car navigation, real-time language translation, and generative tools like ChatGPT and Midjourney.

Which industries use deep learning the most?

Currently, Healthcare (for diagnostics), Finance (for fraud detection), Automotive (for self-driving systems), and Retail (for personalized recommendations) are the leading adopters.

What is the difference between neural networks and deep learning?

A neural network is the mathematical structure used to process data. Deep learning is the broader field that uses “deep” neural networks—meaning networks with many layers—to solve highly complex problems.

What tools are used in deep learning?

Most experts use PyTorch or TensorFlow as their primary framework. They also rely on NVIDIA GPUs for processing power and tools like Hugging Face to access pre-trained models for language and vision tasks.

What are some beginner projects in deep learning?

Starting with the MNIST digit classifier or a cat vs. dog image recognizer is highly recommended. Once you are comfortable, moving into stock price prediction with LSTMs or a simple chatbot is a great next step.

What skills are required to become a deep learning engineer?

You need a combination of strong Python coding skills, a foundational understanding of Linear Algebra and Calculus, and experience in data preprocessing. Familiarity with a framework like PyTorch and an understanding of how to train and deploy models are also essential for a career in the field.