Artificial intelligence (AI) is a field of computer science that builds systems capable of performing tasks that typically require human cognition, such as reasoning, problem-solving, and learning.

What is Artificial Intelligence?

Artificial Intelligence (AI) is the science of building computer systems that can reason, learn, and make decisions by processing data rather than following a rigid set of instructions. It essentially allows a machine to simulate human-like cognitive functions to solve problems and adapt to new information independently.

To understand artificial intelligence, you must look past the science fiction tropes and see it as a fundamental shift in how computers process information. In traditional computing, a human provides both the data and the explicit rules to get an answer. With AI, we provide the data and the desired outcome, and the system determines the mathematical rules itself.
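The contrast between hand-written rules and learned rules can be sketched in a few lines of Python. This is a minimal illustration, not a production fraud model: the function names, the 1,000 threshold, and the toy transaction amounts are all assumptions invented for the example.

```python
# Traditional computing: a person writes the rule explicitly.
def is_fraud_by_rule(amount: float) -> bool:
    return amount > 1000.0  # hypothetical threshold chosen by a human


# Learning: the threshold is derived from labeled examples instead.
def learn_threshold(amounts: list[float], labels: list[bool]) -> float:
    """Return the cut-off that misclassifies the fewest training examples."""
    candidates = sorted(amounts)
    best_cut, best_errors = candidates[0], len(amounts) + 1
    for cut in candidates:
        # Predict "fraud" when amount >= cut and count disagreements with the labels.
        errors = sum((a >= cut) != y for a, y in zip(amounts, labels))
        if errors < best_errors:
            best_cut, best_errors = cut, errors
    return best_cut


# Toy data (made up): transaction amounts and whether each one was fraudulent.
amounts = [12.0, 40.0, 75.0, 300.0, 950.0, 1200.0]
labels = [False, False, False, True, True, True]

threshold = learn_threshold(amounts, labels)
print(threshold)
```

On this toy data the learned cut-off is 300.0, so the learned rule flags the 300 and 950 transactions that the hand-written rule would miss.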

This capability is built on several foundational pillars that define how a machine “thinks” in a digital environment.

- Pattern Recognition: The ability to sift through millions of data points to find correlations that are invisible to the human eye, such as identifying a fraudulent credit card transaction in milliseconds.

- Heuristic Problem Solving: Using rules of thumb and guided trial and error to find an efficient path to a goal, a technique essential for everything from logistics routing to mastering complex games like chess.

- Natural Language Understanding: The process of decoding the nuances, context, and intent behind human speech, allowing a machine to respond to a prompt with relevant, human-like logic.

In a professional setting, AI is often categorized by its scope. Most systems today are Narrow AI, designed to excel at a single task like filtering spam or recommending a movie. The theoretical goal of the field remains Artificial General Intelligence, a system that could apply its intelligence to any problem just as a human does.

History and Evolution of AI

The journey of artificial intelligence is marked by cycles of intense optimism and periods of stagnation known as AI Winters. It began as a philosophical question before evolving into a rigorous mathematical discipline that now underpins the global economy.

Early AI (1950s–1970s)

The formal history of the field started in 1950 when Alan Turing published his landmark paper, Computing Machinery and Intelligence. He famously proposed the Turing Test, asking if a machine could behave so much like a human that a judge could not tell the difference. This era was defined by Symbolic AI, where researchers tried to map out human logic using complex if-then rules.

The term Artificial Intelligence was coined by John McCarthy in the proposal for the 1956 Dartmouth Workshop, which he organized with Marvin Minsky and others. These early systems could solve basic algebra and play checkers, but they lacked the raw processing power to handle the messy, unpredictable nature of the real world. This led to the first AI Winter in the 1970s, as funding dried up when the technology failed to meet the massive expectations of the time.

Researchers at this stage faced a fundamental wall, which was the lack of digitized data and memory. Most programs of the era had little capacity to store past decisions and use them to improve future ones, which meant most tasks started from scratch. This era proved that while logic is important, intelligence cannot exist without a memory of past experiences.

Machine Learning Era (1980s–2010s)

By the 1980s, the focus shifted from teaching computers rules to letting them learn from data. This was the rise of Machine Learning. Instead of manually coding every possibility, engineers began using statistical models such as Decision Trees and, from the 1990s, Support Vector Machines.

A major breakthrough occurred in 1986 when Geoffrey Hinton and his colleagues popularized Backpropagation, a method for training neural networks by correcting errors in their output. However, the technology was still ahead of its time. It wasn’t until the late 1990s and early 2000s, when the internet provided massive datasets and processors became faster, that machine learning began to dominate industry applications like search engines and early recommendation systems.

The shift during this period can be summarized by three major changes in the landscape.

- The Availability of Data: The explosion of the web gave models billions of examples to study.

- Moore’s Law: Hardware finally became small and fast enough to run complex statistical calculations.

- Algorithmic Refinement: Techniques like Random Forests and Boosting allowed for much higher accuracy in predictions.

This era proved that the key to intelligence was not just better logic, but better data. In 1997, IBM’s Deep Blue defeated world chess champion Garry Kasparov, signaling that machines were beginning to outperform humans in highly structured, strategic domains, although Deep Blue itself relied mainly on large-scale search and handcrafted evaluation rather than on learning from data.

Deep Learning Breakthroughs (2010s–2020s)

The 2010s marked the Deep Learning Revolution, fueled by a perfect storm of Big Data and the discovery that GPUs, such as those made by NVIDIA, could speed up the matrix arithmetic behind neural networks, often by an order of magnitude or more. In 2012, a model called AlexNet won the ImageNet image recognition contest by a wide margin, proving that deep neural networks with many layers were the future.

This decade saw the birth of the Transformer architecture in 2017, which allowed models to understand the relationship between words in a sentence simultaneously rather than one by one. This led directly to the creation of Large Language Models and changed the way humans interact with technology forever.

The impact of this era was seen across multiple sectors almost immediately.

- Computer Vision: Machines matched or exceeded human performance on several benchmarks for identifying objects and faces.

- Voice Search: Assistants like Siri and Alexa moved from simple commands to understanding complex requests.

- Strategic Games: AlphaGo defeated Lee Sedol in 2016, a feat many experts thought was still decades away.

Deep learning removed the human bottleneck of feature engineering, meaning the machine could now decide for itself which parts of the data were most important to its success.

AI Revolution Today

As we move through 2026, we have transitioned from the era of chatbots to the era of Agentic AI. Intelligence is no longer a separate tool; it has become a fundamental layer of global infrastructure. Modern AI systems are now multimodal, meaning they can see, hear, and speak at the same time. We are seeing a shift toward Edge AI, where complex models run locally on devices like smartwatches and medical sensors rather than relying on a distant cloud server.

The current landscape is defined by the move toward Action-Oriented AI. In the past, you might ask an AI to write a plan. Today, you ask the AI to execute the plan, which might involve browsing the web, using software, and coordinating with other systems. This transition marks the point where AI moves from being a reactive responder to an active participant in our daily lives.

This level of integration is supported by new developments in Retrieval-Augmented Generation (RAG), which allows models to access real-time, private information safely. We are also seeing a massive push into Sovereign AI, where nations are building their own infrastructure to ensure data privacy and cultural relevance in their language models.
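The retrieval step behind RAG can be sketched as follows. This is a simplified illustration under stated assumptions: plain word counts stand in for the learned embeddings a real system would use, the three documents are invented, and `build_prompt` is a hypothetical helper rather than any library's API. The resulting prompt would then be handed to a language model.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag of lowercase word counts (an assumption for this sketch)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[token] * b[token] for token in a)  # Counter returns 0 for missing keys
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, documents: list[str], k: int = 1) -> list[str]:
    """Return the k documents most similar to the question."""
    query = embed(question)
    ranked = sorted(documents, key=lambda doc: cosine(query, embed(doc)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, documents: list[str]) -> str:
    """Place the retrieved text in front of the question before calling a model."""
    context = "\n".join(retrieve(question, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Hypothetical private knowledge base.
documents = [
    "The refund window for annual plans is 30 days.",
    "Support is available on weekdays from 9 to 5.",
    "Passwords must be rotated every 90 days.",
]

prompt = build_prompt("How long is the refund window?", documents)
print(prompt)
```

The design point is that the model never needs to be retrained on the private documents; only the relevant passage is looked up at question time and supplied as context.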

Core AI Technologies

The architecture of an AI system is dictated by the type of data it needs to master. Whether it is a string of text, a digital image, or a sequence of financial transactions, the underlying technology must be tuned to find the signal within that specific noise.

Machine Learning (ML)

Machine Learning is the engine that drives most of the automated decisions we encounter daily. At its heart, it is a statistical approach that allows a system to improve its performance on a specific task through experience. Instead of writing rigid “if this happens, then do that” code, a programmer provides a learning algorithm and a dataset.

This technology relies on several distinct learning styles to achieve its goals.

- Supervised Learning: The model is trained on labeled data, meaning it is given the correct answers during its “study” phase. This is how email filters learn to identify spam by looking at millions of messages already marked as junk by humans.

- Unsupervised Learning: The system is given raw data without any labels and must find its own patterns or groupings. Retailers use this to “cluster” customers into different segments based on buying habits they didn’t even know existed.

- Reinforcement Learning: The algorithm learns through a system of rewards and penalties. It is the foundation for robotics and game-playing AI, where the agent tries millions of different moves to see which one leads to the highest score.
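As one concrete case from the list above, unsupervised clustering can be sketched with k-means, a common clustering algorithm chosen here purely for illustration (the text does not name a specific method). The spending figures and the two starting centers are made-up assumptions.

```python
def kmeans_1d(values: list[float], centers: list[float], iterations: int = 10):
    """Group one-dimensional values around the given number of centers."""
    clusters: list[list[float]] = [[] for _ in centers]
    for _ in range(iterations):
        # Assignment step: each value joins its nearest center.
        clusters = [[] for _ in centers]
        for value in values:
            nearest = min(range(len(centers)), key=lambda i: abs(value - centers[i]))
            clusters[nearest].append(value)
        # Update step: each center moves to the mean of its members.
        centers = [
            sum(members) / len(members) if members else centers[i]
            for i, members in enumerate(clusters)
        ]
    return centers, clusters


# Hypothetical monthly spend per customer; no labels are provided.
monthly_spend = [20, 25, 30, 200, 220, 240]
centers, clusters = kmeans_1d(monthly_spend, centers=[20.0, 240.0])
print(centers)
print(clusters)
```

With this data the algorithm settles on two segments, low spenders around 25 and high spenders around 220, without ever being told that such groups exist.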

Deep Learning

Deep learning is a subset of machine learning that uses artificial neural networks to solve highly complex problems. The word deep refers to the many layers through which data must pass before a result is reached. Each layer transforms the information, and the representation becomes increasingly abstract as it moves toward the final output.

What makes this technology unique is its ability to handle unstructured data like raw audio or uncompressed video. In standard machine learning, a human often has to hand-craft the features the model looks at. In deep learning, the model performs its own feature extraction. If you show a deep learning model a million photos of cars, it eventually figures out the concept of a wheel or a windshield on its own, without a human ever defining those shapes.
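The idea of data passing through layers can be made concrete with a tiny two-layer network that computes XOR, a function no single layer of this kind can represent. This is only a sketch: the weights below are set by hand for illustration, whereas a real network would learn them from data through backpropagation.

```python
def step(x: float) -> int:
    """Simple threshold activation: fire (1) when the input is positive."""
    return 1 if x > 0 else 0


def layer(inputs: list[float], weights: list[list[float]], biases: list[float]) -> list[int]:
    """One layer: a weighted sum per neuron, followed by the activation."""
    return [
        step(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


# Hidden layer (hand-set): neuron 0 behaves like OR, neuron 1 like AND.
HIDDEN_W = [[1, 1], [1, 1]]
HIDDEN_B = [-0.5, -1.5]
# Output layer (hand-set): fires when OR is true but AND is not.
OUT_W = [[1, -1]]
OUT_B = [-0.5]


def xor_network(a: int, b: int) -> int:
    hidden = layer([a, b], HIDDEN_W, HIDDEN_B)  # intermediate features
    return layer(hidden, OUT_W, OUT_B)[0]       # final decision


for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(a, b, xor_network(a, b))
```

The hidden layer turns the raw inputs into two intermediate features, and the output layer combines those features into the answer, which is the same pattern deeper networks repeat many times over.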

Natural Language Processing (NLP)

Natural Language Processing is the bridge between human communication and computer code. It allows machines to read, decipher, and understand the intent behind the way people speak and write. NLP is not just about identifying words; it is about understanding context, sentiment, and the subtle nuances of grammar.

This field has moved from simple keyword matching to complex semantic understanding through several key processes.

- Tokenization: Breaking a sentence down into individual pieces or tokens.

- Sentiment Analysis: Determining if a piece of text is angry, happy, or neutral.

- Named Entity Recognition: Identifying specific people, places, or dates within a block of text.
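Two of the processes above, tokenization and sentiment analysis, can be sketched with the Python standard library. This is a deliberately naive illustration: the word lists are invented, and modern systems typically use subword tokenizers and trained models rather than fixed lexicons.

```python
import re

# Tiny hand-made lexicons (assumptions for this sketch only).
POSITIVE = {"great", "love", "happy"}
NEGATIVE = {"terrible", "hate", "angry"}


def tokenize(text: str) -> list[str]:
    """Split text into lowercase word tokens, dropping punctuation."""
    return re.findall(r"[a-z']+", text.lower())


def sentiment(text: str) -> str:
    """Label text by counting positive and negative words."""
    tokens = tokenize(text)
    score = sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(tokenize("I love this phone!"))
print(sentiment("I love this phone!"))
print(sentiment("The delivery was terrible."))
print(sentiment("It arrived on Monday."))
```

A lexicon like this cannot handle negation or sarcasm ("not great"), which is one reason the field moved from keyword matching toward models that take context into account.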

Large Language Models (LLMs)

Large Language Models are massive neural networks trained on a large share of the publicly available written text. Unlike basic NLP tools, an LLM like GPT-4 or Gemini does not just analyze text; it generates text by repeatedly predicting a statistically likely next token (a word or word fragment) in a sequence. Because these models are trained on trillions of words, they develop a probabilistic understanding of logic, coding, and creative writing.
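Next-word prediction can be illustrated at toy scale with a bigram model, which simply counts which word tends to follow which. This is a sketch of the statistical idea only: real LLMs use deep neural networks over far longer contexts, and the one-sentence corpus here is invented.

```python
from collections import Counter, defaultdict
from typing import Optional


def train_bigrams(corpus: str) -> dict:
    """Count, for every word, how often each other word follows it."""
    counts = defaultdict(Counter)
    words = corpus.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts: dict, word: str) -> Optional[str]:
    """Return the most frequently observed follower, or None if the word is unseen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


corpus = "the cat sat on the mat and the cat slept"
model = train_bigrams(corpus)
print(predict_next(model, "the"))
```

In this corpus "the" is followed by "cat" twice and "mat" once, so the model predicts "cat". Scaling the same principle up to trillions of words and billions of parameters is what gives LLMs their fluency.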

The real power of an LLM comes from its scale. When a model reaches a certain size, it begins to show emergent properties, such as the ability to translate between languages it wasn’t explicitly taught or to solve mathematical word problems by “thinking” through the steps.

Generative AI

Generative AI is a shift from machines that analyze to machines that create. While traditional AI was used to categorize existing data, generative models use their training to synthesize entirely new content. This includes text, hyper-realistic images, music, and even synthetic voices.

This technology primarily uses two types of architectures. Generative Adversarial Networks (GANs) pit two models against each other: one creates an image and the other tries to spot the fake, until the results are hard to distinguish from real data. Diffusion Models start from random noise and gradually denoise it, guided by a text prompt, until a clear, high-resolution picture emerges.

Predictive AI

While generative AI looks at what could be, Predictive AI focuses on what will likely happen. It uses historical data to forecast future events with a quantified level of confidence. This is the crystal ball of the corporate world, used to anticipate everything from a spike in electricity demand to a potential mechanical failure in a jet engine.

Industries rely on this for risk management and optimization. In supply chain logistics, predictive AI can look at weather patterns, port congestion, and fuel prices to tell a company exactly when they should ship their products to avoid delays.
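Forecasting from historical data can be sketched with the simplest predictive model, a straight line fitted by ordinary least squares. The monthly demand figures are invented for illustration, and real forecasting systems usually combine many more signals than a single trend.

```python
def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical history: units shipped in months 1 to 5.
months = [1, 2, 3, 4, 5]
demand = [110, 120, 130, 140, 150]

slope, intercept = fit_line(months, demand)
forecast_month_6 = slope * 6 + intercept
print(forecast_month_6)
```

Because this toy history grows by exactly 10 units a month, the fitted line has a slope of 10 and forecasts 160 units for month 6.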

Computer Vision & Robotics

Computer Vision is the technology that gives machines eyes. It involves training computers to interpret and understand the visual world. By using digital images from cameras and videos, models can accurately identify and classify objects, and then react to what they see.

When combined with Robotics, this creates autonomous systems capable of navigating physical space.

- Sensor Fusion: Combining data from cameras, LiDAR, and radar to build a 360-degree map of the environment.

- Path Planning: Calculating the safest and fastest route for a robot to move from point A to point B without hitting obstacles.

- Actuation: Converting digital decisions into physical movement, such as a robotic arm picking up a fragile piece of fruit without crushing it.
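The path planning step above can be sketched with breadth-first search on a small grid, one of the simplest planners that guarantees a shortest route when every move costs the same. The grid layout is made up, and real robots often use more sophisticated planners such as A*.

```python
from collections import deque


def shortest_path(grid, start, goal):
    """Breadth-first search over free cells (0); cells marked 1 are obstacles."""
    rows, cols = len(grid), len(grid[0])
    queue = deque([start])
    came_from = {start: None}  # remembers how each cell was reached
    while queue:
        current = queue.popleft()
        if current == goal:
            # Walk backwards from the goal to rebuild the route.
            path = []
            while current is not None:
                path.append(current)
                current = came_from[current]
            return path[::-1]
        r, c = current
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and (nr, nc) not in came_from:
                came_from[(nr, nc)] = current
                queue.append((nr, nc))
    return None  # no obstacle-free route exists


# Hypothetical floor map: a wall blocks the direct route from A to B.
grid = [
    [0, 0, 0],
    [1, 1, 0],
    [0, 0, 0],
]
path = shortest_path(grid, start=(0, 0), goal=(2, 0))
print(path)
```

Here the robot has to go around the wall through the right-hand column, so the route visits seven cells instead of the three a straight line would need.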

Properties and Capabilities of AI

The defining characteristic of artificial intelligence is not its speed, but its ability to handle “fuzzy” situations. Traditional software is binary: an output is either right or wrong according to a fixed rule. AI, however, operates in probabilities.
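Operating in probabilities often means converting a model's raw scores into a distribution over possible answers. A common way to do that is the softmax function, sketched below; the two scores and the labels are assumptions invented for the example.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Turn arbitrary scores into probabilities that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]  # subtract max for numerical stability
    total = sum(exps)
    return [e / total for e in exps]


labels = ["spam", "not spam"]
probabilities = softmax([2.0, 0.5])  # hypothetical raw model scores

for label, p in zip(labels, probabilities):
    print(f"{label}: {p:.2f}")
```

Instead of a hard yes or no, the system reports roughly an 82% chance of spam, and the application decides how much confidence is enough to act on.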

One of its most vital properties is Adaptability. An AI system can adjust its internal weights based on new data, meaning it can improve as it is retrained or fine-tuned on fresh examples. This leads to Autonomy, where the system can make complex decisions without human intervention. Whether it is an AI agent managing a stock portfolio or a self-driving car navigating a rainy highway, the capability to assess a situation and act independently is what separates true AI technology from simple automation.

Finally, we are seeing the rise of Reasoning Capabilities. Modern AI can now follow a chain of thought, breaking a large problem into smaller pieces to reach a logical conclusion. This moves the technology away from being a simple search engine and toward being a cognitive partner that can assist in scientific discovery and complex engineering.

Top Applications Across Industries

The versatility of AI technology allows it to solve unique problems in almost every sector. Whether it is analyzing a biological sequence or a global supply chain, the underlying ability to find patterns remains the primary value driver.

Healthcare

Artificial intelligence has fundamentally changed the speed of medical discovery. In drug discovery, models screen very large libraries of chemical compounds for likely interactions with human proteins. This can shorten the early search for candidate medications from years to months or, in the best cases, weeks.

Radiology is also seeing massive shifts. AI algorithms now act as a “second set of eyes” for doctors. These systems flag anomalies in X-rays, MRIs, and CT scans with high precision. In some studies, they have matched or exceeded human experts in detecting early-stage cancer.

Beyond diagnostics, personalized medicine uses a patient’s genetic data to tailor treatment plans. This ensures that every therapy is mathematically optimized for an individual’s unique biology.

Finance & Trading

The financial sector relies on AI for its speed and its ability to manage risk in volatile markets. Algorithmic Trading systems can place orders within microseconds, reacting to global news and price shifts faster than any human floor trader could.

- Fraud Detection: Banks use machine learning to analyze spending patterns in real-time, instantly blocking a transaction if it deviates from a user’s historical behavior.

- Credit Scoring: AI looks beyond traditional bank statements to assess creditworthiness, allowing for more inclusive lending based on a wider range of financial data points.

- Robo-Advisors: Automated platforms now manage billions in assets, providing personalized investment advice based on a user’s risk tolerance and long-term goals.
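The fraud detection idea above, flagging a transaction that deviates from a user's historical behavior, can be sketched with a simple z-score check. This is a statistical baseline rather than a machine learning model, and the spending history and the threshold of 3 standard deviations are assumptions for illustration.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], amount: float, z_threshold: float = 3.0) -> bool:
    """Flag an amount that lies far from the user's usual spending.

    Requires at least two past transactions so a spread can be computed.
    """
    typical = mean(history)
    spread = stdev(history)
    if spread == 0:
        return amount != typical
    z_score = abs(amount - typical) / spread
    return z_score > z_threshold


# Hypothetical card history for one user.
history = [42.0, 38.5, 51.0, 45.25, 40.0, 47.5]

print(is_anomalous(history, 49.0))
print(is_anomalous(history, 900.0))
```

A 49.00 purchase sits close to this user's usual range and passes, while a 900.00 purchase is many standard deviations away and would be held for review. Production systems add many more signals, such as location, merchant, and time of day.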

Retail & E-Commerce

In the world of online shopping, AI is the invisible hand that guides every click. Hyper-personalization engines analyze your browsing history, the time of day you shop, and even your scroll speed. This data helps the system suggest products you are likely to buy.

Amazon has patented a predictive approach known as anticipatory shipping. The idea is to move products to a local warehouse before a customer even hits the buy button. On the front end, visual search allows users to snap a photo of a real-world item. Within seconds, the AI finds the exact product or a close match online.

Human Resources & Recruitment

Recruitment has transformed from a manual resume review to a data-driven science. AI tools now sift through thousands of applications to find the “best fit” candidates based on specific skill sets and cultural markers.

Many HR departments use predictive analytics to identify flight risks. These systems flag employees who may be planning to leave the company, allowing management to intervene with retention strategies early. While this increases efficiency, it has sparked a major debate about algorithmic bias. If hiring software is trained on historical data that lacks diversity, the results can be inaccurate or unfair.

Virtual Assistants & Administrative AI

The days of simple voice commands are over. Modern virtual assistants like Siri, Alexa, and dedicated enterprise agents are now capable of handling multi-step logic. They can schedule meetings across different time zones, summarize long email threads, and even draft initial replies.

In the corporate world, Robotic Process Automation (RPA) handles the digital paperwork. These systems automatically transfer data between platforms and file reports. This shift frees up human workers to focus on more strategic, high-value tasks.

Media, Marketing & Creativity

Generative AI has completely disrupted the creative landscape. Marketing teams now use tools like Midjourney and Claude to build entire ad campaigns in a fraction of the usual time. These systems handle everything from the initial copy to high-resolution imagery.

In the film and gaming industries, AI now powers neural rendering. This technology creates hyper-realistic textures and environments that once required months of manual 3D modeling. Even musicians use AI to assist in composition. Some models suggest melodies, while others turn a simple piano riff into a complex orchestral arrangement.

Challenges and Risks of AI

As the reach of AI expands, so do the potential points of failure. These risks are not just technical bugs; they are structural challenges that can have real-world consequences for privacy, safety, and the law.

Data Risks

AI is only as good as the information it consumes. Data Poisoning is a growing threat where malicious actors feed “bad” data into a model during its training phase to create backdoors or bias the results. Furthermore, the massive collection of personal information required for modern AI raises significant Privacy Concerns. If a model remembers a piece of sensitive user data, that information could potentially be extracted through a clever prompt, leading to a massive security breach.

Model Risks

Even the most advanced models suffer from inherent flaws. Hallucination remains a primary issue, where a Large Language Model provides a false answer with total confidence. There is also the problem of Model Drift, where a system that was highly accurate on day one slowly becomes less reliable as the real-world data it encounters begins to change.
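Model Drift is usually caught by monitoring, and one simple check compares live input data with the data the model was trained on. The sketch below measures how far the live average has moved, in units of the training spread. The function names, the threshold of 2, and the sample values are all assumptions, and production monitoring typically uses richer statistical tests.

```python
from statistics import mean, stdev


def drift_score(training_values: list[float], live_values: list[float]) -> float:
    """Shift of the live mean from the training mean, in training standard deviations."""
    baseline = mean(training_values)
    spread = stdev(training_values)
    shift = abs(mean(live_values) - baseline)
    if spread == 0:
        return 0.0 if shift == 0 else float("inf")
    return shift / spread


def has_drifted(training_values: list[float], live_values: list[float], threshold: float = 2.0) -> bool:
    return drift_score(training_values, live_values) > threshold


# Hypothetical feature, for example average order value.
training = [100, 102, 98, 101, 99]
stable_week = [101, 99, 100]
shifted_week = [120, 118, 123]

print(has_drifted(training, stable_week))
print(has_drifted(training, shifted_week))
```

The stable week matches the training data, while the shifted week has moved far outside the range the model learned from, which is a signal to investigate or retrain.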

In high-stakes environments like medicine or autonomous driving, a 1% error rate can be catastrophic. The “Black Box” nature of these models makes it difficult to audit why a mistake happened, creating a crisis of trust between the technology and its users.

Operational Risks

The infrastructure required to run global AI is fragile and expensive. The Energy Consumption of massive data centers is a major environmental hurdle, with a single training run for a top-tier model consuming as much electricity as thousands of homes.

There is also the risk of Over-Reliance. As businesses automate more of their core logic, they lose the institutional knowledge of how to perform those tasks manually. If the AI system goes offline or experiences a glitch, the entire organization can grind to a halt because no human remains who understands the underlying process.

Ethical and Legal Risks

The legal landscape is currently struggling to keep pace with AI development. Intellectual Property is a major battleground, as artists and writers sue AI companies for training models on their copyrighted work without permission.

Beyond the law, there are deep ethical concerns regarding Algorithmic Bias. If an AI used for court sentencing or bank loans is trained on biased historical data, it will automate and scale that prejudice. There is also the existential question of Job Displacement, as AI becomes capable of performing not just blue-collar labor, but high-level cognitive work in law, accounting, and software engineering.

AI Ethics and Governance

The rapid deployment of intelligence has created a “regulatory lag” where technology moves faster than the law. Ethics in AI is no longer a theoretical debate for philosophers; it is a technical requirement for developers. At the center of this movement is Explainable AI (XAI), which aims to break the “black box” nature of neural networks. If an AI denies a loan or suggests a medical treatment, the system must be able to provide a human-readable “receipt” of its logic to ensure fairness and accountability.

Global governance is also taking shape through frameworks like the EU AI Act, which categorizes AI systems based on their risk levels. High-risk applications, such as biometric identification or critical infrastructure management, face strict transparency and security audits.

- Algorithmic Neutrality: Ensuring that training data does not reflect historical human prejudices.

- Data Sovereignty: Protecting the rights of individuals and nations to control how their digital footprints are used to train massive models.

- Human-in-the-Loop (HITL): A governance principle requiring that a human expert makes the final decision in high-stakes scenarios, using the AI only as a high-speed consultant.
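The Human-in-the-Loop principle can be expressed as a routing rule in code. The sketch below is one possible design, not a prescribed standard: the `Decision` class, the `route` function, and the 0.9 confidence floor are hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    outcome: str
    decided_by: str


def route(prediction: str, confidence: float, high_stakes: bool,
          min_confidence: float = 0.9) -> Decision:
    """Let the model decide only low-stakes, high-confidence cases."""
    if high_stakes or confidence < min_confidence:
        # The model's suggestion is kept as advice; a person makes the final call.
        return Decision(outcome="pending human review", decided_by="human")
    return Decision(outcome=prediction, decided_by="model")


print(route("approve", confidence=0.99, high_stakes=True))
print(route("approve", confidence=0.95, high_stakes=False))
print(route("approve", confidence=0.50, high_stakes=False))
```

Note that a high-stakes case goes to a person even when the model is 99% confident. The stakes, not the confidence score, determine who makes the final decision.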

Case Studies (Real-World Examples)

To see the true impact of AI, we must look at where it has solved problems that were previously thought to be impossible.

- AlphaFold (Biotechnology): For 50 years, predicting how a protein folds into its 3D shape was a “grand challenge” in biology. Google DeepMind’s AlphaFold used deep learning to predict the structures of nearly all 200 million proteins known to science. This has accelerated the development of new vaccines and plastic-recycling enzymes by decades.

- John Deere (Agriculture): Using computer vision and robotics, modern tractors now use “See & Spray” technology. AI cameras identify individual weeds in a field of crops in real-time, allowing the machine to spray only the weed and not the plant. This reduces herbicide use by up to 77%, saving money and protecting the environment.

- JPMorgan Chase (Legal/Finance): The bank implemented a program called COIN (Contract Intelligence). Using image recognition and NLP, it interprets commercial loan agreements that used to take legal teams 360,000 hours of manual labor per year. The AI now completes the same task in seconds with fewer errors.

Emerging Trends and the Future of AI

The Brute Force era of AI, characterized by simply making models larger, is reaching its limit. The next frontier is Efficiency and Autonomy. We are seeing the rise of Small Language Models (SLMs) that provide high-level intelligence while running on a fraction of the electricity, allowing for powerful AI to live directly on mobile hardware without cloud dependency.

We are also entering the age of Agentic Workflows. In the past, AI was a reactive tool: you asked a question, and it gave an answer. Future AI will be proactive. These agents will have the ability to use software, browse the web, and collaborate with other AI agents to complete complex, multi-day projects like planning a corporate event or coding an entire application from a single prompt.

Finally, Biological Computing is beginning to merge with AI research. Scientists are looking at the energy efficiency of the human brain to design Neuromorphic chips that process information using 10,000 times less power than current silicon-based GPUs.

Frequently Asked Questions

What is AI in simple terms?

Artificial intelligence is a branch of computer science that builds software capable of learning and making decisions like a human. Instead of following a rigid set of pre-written rules, it looks at data to find patterns and solve problems on its own.

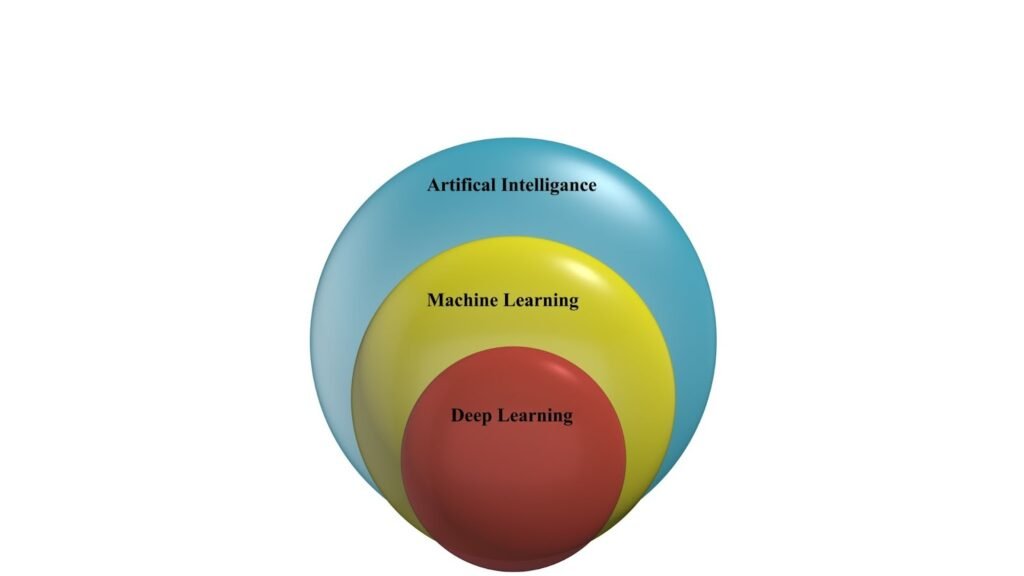

What is the difference between AI, ML, and Deep Learning?

Think of them as nesting dolls. AI is the broad concept of machines acting smart. Machine Learning (ML) is a specific way to achieve AI by letting machines learn from data. Deep Learning is a subset of ML that uses many-layered “neural networks” to handle complex tasks like speech and vision.

How do LLMs like GPT work?

Large Language Models work by predicting the most likely next word in a sequence. They aren’t “thinking” in the human sense; they are using massive statistical maps built from trillions of words of human text to generate logical, context-aware responses.

What are some real-world AI applications across industries?

AI is used in Healthcare for diagnostics, Finance for fraud detection, Retail for personalized shopping, and Manufacturing for predictive maintenance on heavy machinery.

What are the ethical concerns with AI?

The main concerns include algorithmic bias (AI making unfair decisions), privacy (the use of personal data for training), and the Black Box problem (the difficulty of understanding how an AI reached a specific conclusion).

Can AI replace human jobs entirely?

While AI will automate many repetitive and data-heavy tasks, it is unlikely to replace jobs that require deep empathy, complex social negotiation, or high-level ethical judgment. Most experts believe AI will change how we work rather than eliminating work entirely.

How can AI be adopted responsibly by businesses?

Businesses should focus on transparency, ensuring they tell users when they are interacting with an AI. They must also implement rigorous data auditing to prevent bias and keep a “human in the loop” for all high-stakes decisions.